Introduction

Every time you type a search query or ask Google Assistant a question, you are interacting with some of the most advanced artificial intelligence ever built. But how does Google AI work, exactly? What processes run beneath the surface of that simple white search box?

Google has quietly become one of the most AI-driven companies in the world. Its systems handle billions of searches per day, understand spoken language, predict what you want to type, and even help detect diseases in medical images. This guide breaks down how Google AI works, from its search algorithms to its conversational assistant.

Google’s AI Journey: A Quick History

Google has been investing in artificial intelligence since its earliest days, but the pace accelerated dramatically in the 2010s. Key milestones include:

- 2015: RankBrain launched, Google’s first machine learning system used in search ranking

- 2018: BERT introduced as a research model; Google began applying it to Search in 2019

- 2018–2019: Duplex announced and rolled out, letting Google Assistant place real phone calls to businesses

- 2021: MUM (Multitask Unified Model) announced, which Google described as 1,000 times more powerful than BERT

- 2023: Google Bard launched to compete with ChatGPT (renamed Gemini in 2024)

- 2024–2026: Gemini models integrated across Google Search, Workspace, and Android

How Does Google AI Work in Search?

RankBrain: The First AI Brain of Google Search

RankBrain was Google’s first AI-based search component. It uses machine learning to interpret ambiguous or never-before-seen queries.

Before RankBrain, Google relied largely on hand-written rules and keyword matching. If a query didn’t resemble anything seen before, results were often poor. RankBrain learned to associate unfamiliar queries with similar, known ones, which improved relevance for those queries.
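The idea of mapping an unfamiliar query to the closest known one can be illustrated with a toy sketch. This is not RankBrain's actual method, which Google has not published in detail. It assumes simple bag-of-words vectors and cosine similarity, and the `KNOWN_QUERIES` list and all function names are hypothetical.

```python
import math
from collections import Counter

# Hypothetical set of queries the system has already seen.
KNOWN_QUERIES = [
    "how to bake sourdough bread",
    "best running shoes for flat feet",
    "weather forecast for tomorrow",
]

def vectorize(text):
    """Turn a query into a bag-of-words count vector."""
    return Counter(text.lower().split())

def cosine_similarity(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[word] * b[word] for word in a if word in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def closest_known_query(query, known=KNOWN_QUERIES):
    """Return the known query most similar to an unseen one."""
    query_vec = vectorize(query)
    return max(known, key=lambda k: cosine_similarity(query_vec, vectorize(k)))

# An unseen phrasing is matched to the known query it overlaps with most.
print(closest_known_query("shoes for running with flat feet"))
```

Production systems typically use learned dense embeddings rather than word counts, so queries that share no words at all can still be matched.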

BERT: Understanding Context, Not Just Keywords

BERT (Bidirectional Encoder Representations from Transformers) changed how Google interprets queries. It allows Google to understand the context of words in a sentence, not just the words individually.

For example, the word “bank” means something different in:

- “I sat by the river bank”

- “I deposited money at the bank”

BERT reads the words on both sides of “bank” at once to work out which meaning applies. When it launched in Search in 2019, Google said it would improve understanding of about one in ten English-language searches in the US.
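To make the role of two-sided context concrete, here is a toy word-sense picker. It is not BERT and involves no neural network: it is a simple cue-word overlap heuristic, and the `SENSE_CUES` table and `disambiguate` function are hypothetical names invented for this sketch. What it shares with BERT is only the idea that words both before and after the target inform its meaning.

```python
# Hypothetical cue words for each sense of "bank"; a real model learns these
# associations from data rather than from a hand-written table.
SENSE_CUES = {
    "river edge": {"river", "water", "shore", "sat", "fishing"},
    "financial institution": {"money", "deposited", "loan", "account", "cash"},
}

def disambiguate(sentence, target="bank"):
    """Pick the sense whose cue words overlap most with the words
    on both sides of the target word. Returns None if nothing matches."""
    words = [w.strip(".,!?\"'").lower() for w in sentence.split()]
    if target not in words:
        return None
    idx = words.index(target)
    # Combine left context and right context into one set.
    context = set(words[:idx]) | set(words[idx + 1:])
    scores = {sense: len(cues & context) for sense, cues in SENSE_CUES.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None

print(disambiguate("I sat by the river bank"))
print(disambiguate("I deposited money at the bank"))
# Here the only cue ("loan") comes after the target word.
print(disambiguate("The bank approved my loan"))
```

In the third sentence a strictly left-to-right reader would reach “bank” before seeing any useful cue, which is the kind of case where reading in both directions helps.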

MUM: Google’s Most Powerful Search AI

MUM (Multitask Unified Model) is trained across 75 languages and many tasks at once. It is also multimodal: Google describes it as understanding text and images, with video and audio planned. It was designed to handle complex, multi-part questions.

For example, Google notes that planning a hiking trip can take around eight separate searches. MUM is designed to process the whole question (“I’ve hiked Mt. Adams, what should I prepare differently for Mt. Fuji?”) and return a comprehensive answer in one step.

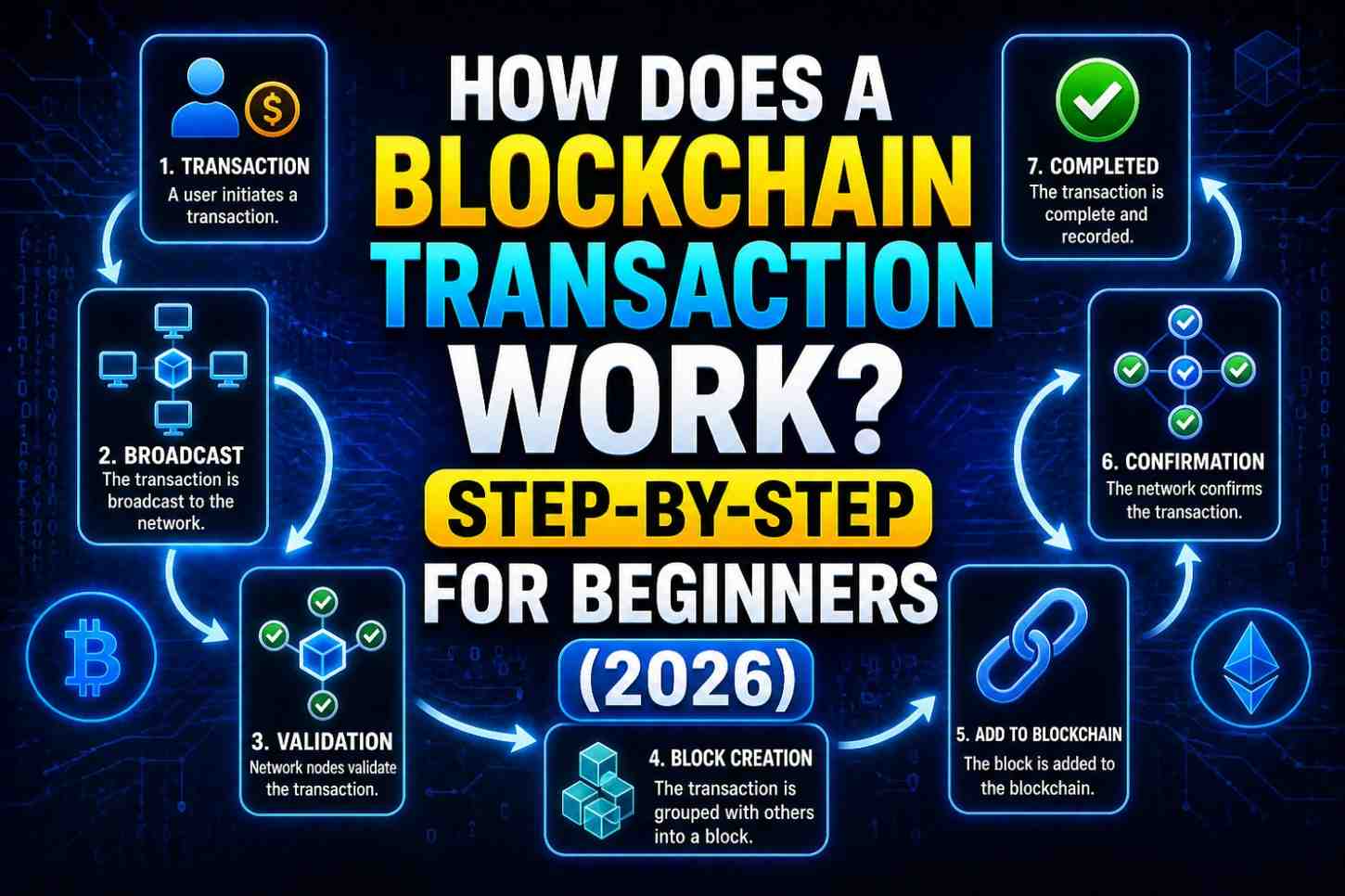

How Does Google AI Work in Google Assistant?

Google Assistant relies on several interconnected AI systems:

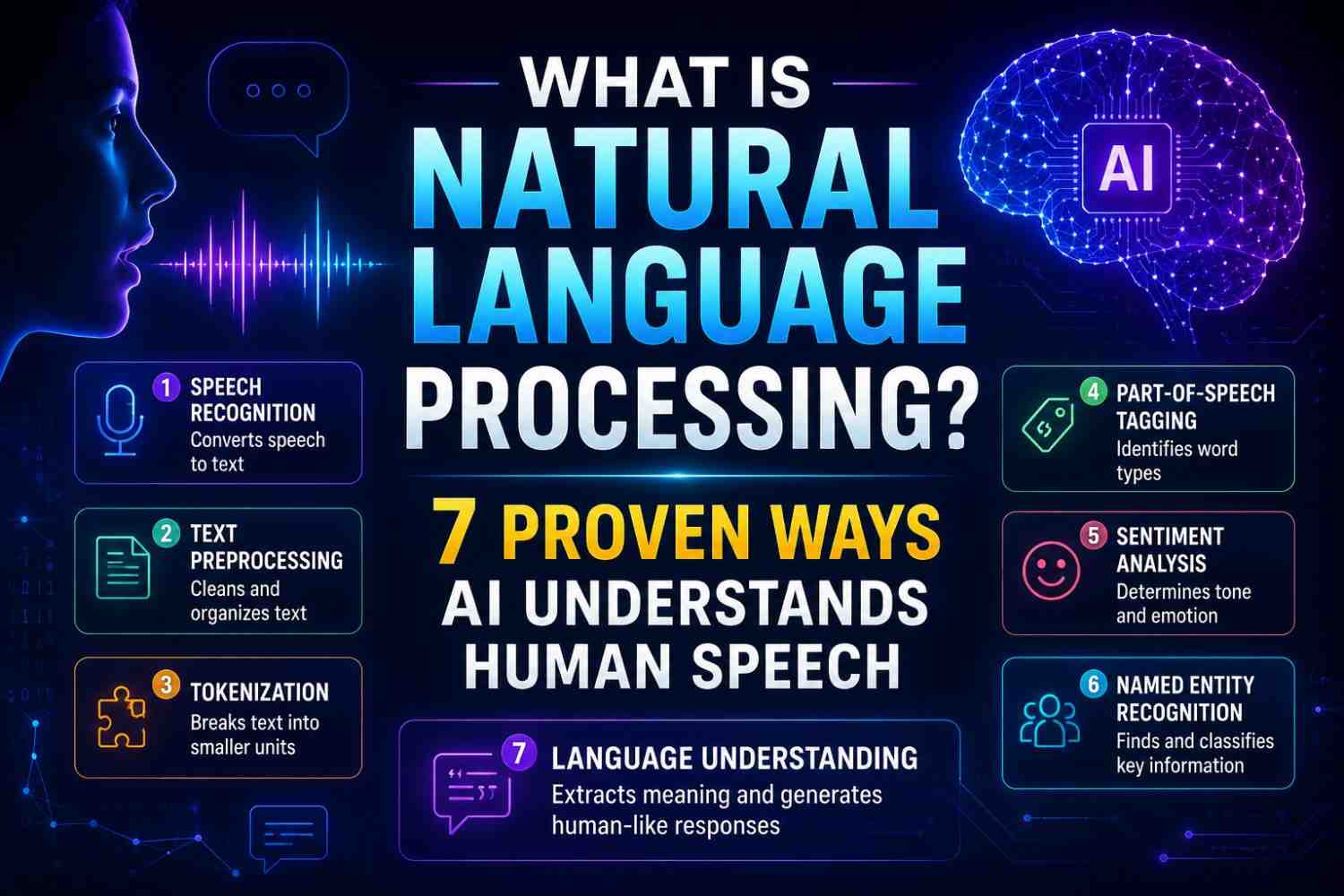

Natural Language Understanding (NLU)

When you say “Hey Google, set a reminder for my dentist appointment tomorrow at 3pm,” the assistant must:

- Detect the wake word using an always-on audio model

- Transcribe your speech using automatic speech recognition (ASR)

- Understand intent — you want to create a reminder

- Extract entities — time (3pm), date (tomorrow), subject (dentist appointment)

- Execute the action — access your calendar and create the event

Each of these steps is typically handled by a specialized model, and the whole chain runs in a fraction of a second.
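The intent and entity steps above can be sketched with a small rule-based parser. This is only an illustration under simplifying assumptions: a single regular expression stands in for the trained intent and entity models, and the `parse_reminder` function and its output fields are hypothetical, not part of any Google API.

```python
import re
from datetime import date, timedelta

def parse_reminder(utterance, today):
    """Toy intent and entity extraction for reminder requests.
    Returns None if the utterance does not look like a reminder."""
    text = utterance.lower()
    # Intent detection: one hand-written pattern stands in for a classifier.
    match = re.search(
        r"set a reminder for (?:my )?(.+?) (today|tomorrow) at (\d{1,2})\s*(am|pm)",
        text,
    )
    if match is None:
        return None
    subject, day_word, hour_text, meridiem = match.groups()
    # Entity normalization: resolve the relative date and the 12-hour time.
    day = today + timedelta(days=1 if day_word == "tomorrow" else 0)
    hour = int(hour_text) % 12 + (12 if meridiem == "pm" else 0)
    return {
        "intent": "create_reminder",
        "subject": subject,
        "date": day.isoformat(),
        "hour_24": hour,
    }

result = parse_reminder(
    "Hey Google, set a reminder for my dentist appointment tomorrow at 3pm",
    today=date(2026, 1, 15),
)
print(result)
```

A real assistant would receive this text from the speech recognizer and pass the structured result to whatever service creates the reminder.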

Neural Networks in Voice Recognition

Google’s voice recognition uses deep neural networks (DNNs) trained on thousands of hours of human speech. These networks learn accents, dialects, speaking speeds, and background noise patterns.

Google has reported word error rates below 5% for its speech recognition in clean audio conditions, a level often described as comparable to human transcription accuracy.
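Error rates for speech recognition are usually reported as word error rate (WER): the number of substituted, deleted, and inserted words divided by the number of words in the reference transcript. The sketch below computes it with a standard word-level edit distance. The function name and sample sentences are illustrative and are not taken from any Google tool.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / reference length,
    computed with word-level Levenshtein distance."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # prev[j] holds the edit distance between the ref words processed so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: ref word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)

# One wrong word out of eight gives a WER of 0.125 (12.5%).
print(word_error_rate(
    "set a reminder for tomorrow at three pm",
    "set a reminder for tomorrow at tree pm",
))
```

By this measure, a 5% error rate means roughly one word in twenty is transcribed incorrectly.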

Google Gemini: The New Era of Google AI

In 2023, Google launched Gemini, its most capable AI model family. In 2024–2026, Gemini became deeply embedded in:

- Google Search — AI Overviews (formerly SGE) that summarize answers directly in search results

- Google Workspace — writing, summarizing, and analyzing in Gmail and Docs

- Android — on-device AI features without internet connectivity

- Google Lens — visual search and real-world object identification

Gemini Ultra, the largest model in the original Gemini family, is multimodal, meaning it can process text, images, audio, and video.

How Google AI Personalizes Your Experience

One often-overlooked aspect of how Google AI works is personalization. Google uses AI to tailor results based on:

- Your search history

- Your location

- Your device type

- The time of day

- Your past click behavior

This means two people searching the same query may get different results. A user in Mumbai searching “best restaurants” sees results near Mumbai. A regular sports fan searching “the game” gets sports results rather than board game links.
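One simple way to picture this is as a re-ranking step applied on top of a base relevance score. The sketch below is a toy model only: the boost values, field names, and `personalize` function are assumptions made for illustration and do not reflect how Google actually weights these signals.

```python
def personalize(results, user_city=None, interests=()):
    """Re-rank results by base relevance plus hypothetical personal boosts."""
    def score(result):
        total = result["relevance"]
        # Assumed boost when a result is tied to the user's city.
        if user_city and result.get("city") == user_city:
            total += 0.3
        # Assumed boost when a result's topic matches a known interest.
        if result.get("topic") in interests:
            total += 0.2
        return total
    return sorted(results, key=score, reverse=True)

# Two candidate results for the query "the game" (made-up data).
results = [
    {"title": "The Game: board game rules", "relevance": 0.6, "topic": "board games"},
    {"title": "Live match score", "relevance": 0.5, "topic": "sports"},
]

print(personalize(results)[0]["title"])
print(personalize(results, interests={"sports"})[0]["title"])
```

With no profile the board game page ranks first; for a user with a recorded interest in sports, the same query puts the match score on top.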

Google AI and Privacy: What You Should Know

Google’s AI systems collect and process enormous amounts of user data to function effectively. This has raised significant privacy concerns:

- Google stores your search history unless you delete it

- Assistant conversations may be reviewed by human raters for quality improvement

- Personalization profiles are built from your activity across Search, YouTube, Gmail, and Maps

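Users can review and delete this data through Google’s My Activity dashboard. Incognito Mode keeps browsing history from being saved on your device, but it does not by itself stop Google or the websites you visit from collecting data.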

Comparison: Google AI vs. Other AI Assistants

| Feature | Google AI | ChatGPT | Apple Siri |
|---|---|---|---|
| Search Integration | Native | Bing-powered | Limited |
| Multimodal | Yes (Gemini) | Yes (GPT-4o) | Limited |
| Real-time Info | Yes | Yes (with search) | Partial |
| Privacy Focus | Moderate | Moderate | High |
| Device Integration | Android/Chrome | Cross-platform | Apple ecosystem |

FAQs: How Does Google AI Work

Q1: What AI model does Google Search use? Google Search uses a combination of RankBrain, BERT, MUM, and Gemini-based AI systems working together.

Q2: How does Google AI understand voice commands? It uses automatic speech recognition (ASR) followed by natural language understanding (NLU) to detect intent and extract entities.

Q3: Is Google Assistant the same as Gemini? As of 2024–2026, Google is transitioning Google Assistant to Gemini across Android devices.

Q4: Does Google AI learn from my searches? Yes. Google uses your search history and behavior to personalize results, though you can opt out in account settings.

Q5: How accurate is Google’s AI search? For most informational queries, Google achieves very high relevance. Complex or ambiguous queries benefit most from MUM.

Q6: What is Google AI Overviews? AI Overviews are AI-generated summaries that appear at the top of search results, powered by Gemini.

Conclusion

Now you know how Google AI works, from the deep neural networks processing your voice to the transformer models ranking your search results. Google’s AI is not one single system; it is an interconnected ecosystem of specialized models that work together. For anyone working in SEO and digital marketing in 2026, understanding these systems is essential. Learn more about Google’s AI research at Google DeepMind.